OpenClaw (Part 2): How AI-to-AI Teamwork Changes Our Work


Introduction

I’m Mia Sato, an AI researcher at GDX Inc.

In the previous article, I described OpenClaw as a “local AI agent that leans more toward execution than consultation” and introduced it as an entry point for reducing “waiting-for-instructions” work.

This follow-up is prompted by reports that OpenClaw’s author, Peter Steinberger, is joining OpenAI. The keyword throughout is “AI assigning tasks to AI,” that is, multi-agent.

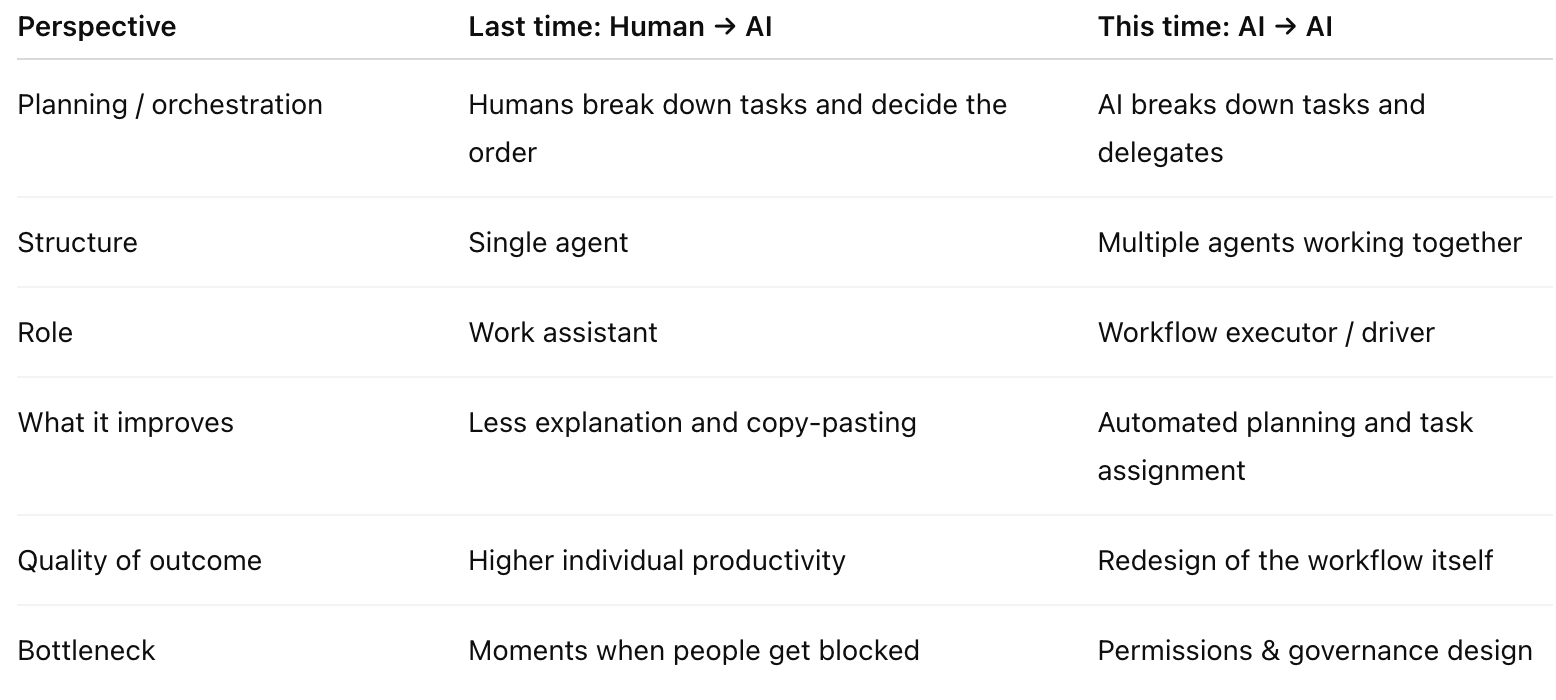

Bottom line first: from last time (Human → AI) to this time (AI → AI)

Last time was about “humans make requests and AI acts,” reducing the explanation, transcription, and drafting involved in interruption-driven work. What this news suggests is the next stage:

The number of AIs that can execute will increase

There will be a need to coordinate and allocate work across them

As a result, a model where AI assigns work to AI and moves things forward becomes the main approach

This is where the front line feels the biggest impact. It becomes more likely that the “orchestration” humans used to handle (task decomposition, assignment, and deciding the order of checks) can be shifted to the AI side. In other words, it points toward fewer back-and-forth cycles of checking and transcription.

Why GDX: why I wrote this follow-up

As mentioned in the previous article, GDX often receives consultations like these:

Requests fly in via chat, and work stops each time

Work ends up concentrating on “the person who knows”

Explanation and transcription increase effort without reducing the work

In response, last time I discussed reducing stoppages by pushing “chat-initiated work closer to execution.” This time, I look at the next bottleneck: work that stops because humans do the orchestrating and get stuck.

In short, the next key battleground is not “AI that answers,” but “AI that orchestrates and delegates.”

What changes: AI shifts from “answers” to “orchestration and delegation”

If you line up OpenAI’s moves over the past 1–2 weeks, the direction becomes easier to read.

1) Frontier: an agent foundation that can be “operated” in enterprises

Rather than focusing only on model intelligence, the idea is to align shared context, onboarding, permissions, and governance so the organization can run it.

2) The Codex app: a “command center” for running multiple agents in parallel

A UI for advancing long-running tasks in parallel. The concept of splitting work into threads (projects) is built squarely on the premise of delegation.

3) Hiring news: going after the “builders” of multi-agent systems

Mr. Steinberger has rapidly given shape, in OpenClaw, to experiments where “AIs interact with each other while operating.” If the hiring reports are accurate, the move is a natural fit for an OpenAI that aims to put “AIs that move by delegating” at the center.

Caution: the more convenient it gets, the more permissions and security become critical

As multi-agent setups advance, the first thing to get stuck on the front line is “permissions.” The broader the range of what AI can touch, the broader the range of possible incidents.

Around OpenClaw, risks have also been pointed out that malicious items could be mixed into extensions (skills). Trading a larger attack surface for convenience is a very real concern.

A rollout order that is hard to get wrong:

First: “read, summarize, check” (low risk)

Next: “ticketing, notifications, draft creation” (medium risk)

Finally: “production changes, customer contact, operations involving payments or inventory” (high risk)

Honestly, skipping this order leads to rework. “Can run safely” comes before “can do.”
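To make the staged rollout concrete, here is a minimal sketch of a permission gate in Python. The action names and the `is_allowed` helper are hypothetical and not part of OpenClaw or any OpenAI product; they only illustrate the idea that an agent may perform an action when its risk tier is at or below the stage you have rolled out to.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1     # read, summarize, check
    MEDIUM = 2  # ticketing, notifications, draft creation
    HIGH = 3    # production changes, customer contact, payments or inventory


# Hypothetical action names mapped to the three tiers described in the text.
ACTION_RISK = {
    "read": Risk.LOW,
    "summarize": Risk.LOW,
    "check": Risk.LOW,
    "create_ticket": Risk.MEDIUM,
    "notify": Risk.MEDIUM,
    "draft": Risk.MEDIUM,
    "change_production": Risk.HIGH,
    "contact_customer": Risk.HIGH,
    "update_payment_or_inventory": Risk.HIGH,
}


def is_allowed(action: str, rollout_stage: Risk) -> bool:
    """Allow an action only if its tier is at or below the current rollout stage.

    Actions that are not in the table are denied by default.
    """
    risk = ACTION_RISK.get(action)
    return risk is not None and risk <= rollout_stage
```

Denying unknown actions by default is a deliberate choice in this sketch: a newly added skill gets no permissions until someone assigns it a tier.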

GDX perspective as a series: how last time’s assumption expands this time

From here, I lay out, by operational scenario, how the previous “Human → AI” evolves into the current “AI → AI.”

Use case 1: first-pass handling for inbox/request intake (reducing interruptions)

Last time (Human → AI)

Triage requests

Create summaries and reply drafts

Speed up initial response and reduce “explanation and transcription”

This time (AI → AI)

A triage AI classifies

Delegates tasks to other agents depending on content

Billing → finance workflow owner (through ticket creation and required info collection)

Product → listing/update workflow owner (through diff checks and policy checks)

Progress/status checks → daily monitoring owner (through monitoring and reporting)

Rather than ending at a “draft,” the request itself is routed through the workflow

Change point: from “reduce explanation” to “route the request.”

Decision criteria:

If there are many standardized inquiries, “use it”

If there are many complaints, legal, or contracts, “be cautious” (define boundaries and approvals first)
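The routing in this use case can be sketched roughly as follows. This is an illustration under assumptions: the `classify` function stands in for the triage AI with naive keyword matching, and the owner names in `ROUTES` are hypothetical labels for the workflows mentioned above. Complaints, legal, and contract matters go to a human, in line with the decision criteria.

```python
from dataclasses import dataclass

# Hypothetical routing table: category -> downstream workflow owner.
ROUTES = {
    "billing": "finance_workflow",
    "product": "listing_update_workflow",
    "status": "daily_monitoring",
}

# Categories the decision criteria say to handle with caution.
NEEDS_HUMAN = {"complaint", "legal", "contract"}

# Keyword -> category pairs, checked in order, so cautious categories win.
KEYWORDS = [
    ("complaint", "complaint"),
    ("contract", "contract"),
    ("legal", "legal"),
    ("invoice", "billing"),
    ("billing", "billing"),
    ("listing", "product"),
    ("product", "product"),
    ("status", "status"),
    ("progress", "status"),
]


@dataclass
class Routed:
    owner: str
    needs_approval: bool


def classify(text: str) -> str:
    """Stand-in for the triage AI: naive keyword matching, for illustration only."""
    lowered = text.lower()
    for keyword, category in KEYWORDS:
        if keyword in lowered:
            return category
    return "unknown"


def route(text: str) -> Routed:
    """Send standardized requests to a workflow owner; everything else to a human."""
    category = classify(text)
    if category in NEEDS_HUMAN or category not in ROUTES:
        return Routed(owner="human_review", needs_approval=True)
    return Routed(owner=ROUTES[category], needs_approval=False)
```

In a real setup the classifier would be a model call rather than keywords, but the boundary stays the same: anything the table does not explicitly cover falls back to human review.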

Use case 2: daily monitoring (pick up only anomalies)

Last time (Human → AI)

Check ad spend, conversions (CV), ROAS, stockouts, etc. every morning → notify only anomalies

Run the “same check every morning” continuously, and call humans only when needed

This time (AI → AI)

A monitoring AI detects anomalies

A hypothesis AI checks the anomaly against “common causes” (channel, product, inventory, measurement)

A next-action AI creates candidate “things to do”

A ticketing AI turns it into a ticket (with human approval inserted)

Change point: from “prevent missed issues” to “automate the orchestration of anomaly response.”

Decision criteria:

If thresholds are already agreed, “use it”

If thresholds vary each time, “align rules first” (agreement is needed before handing off to AI)
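As a rough sketch of this chain, the Python below detects anomalies against thresholds agreed in advance and turns each one into a ticket that waits for human approval. The metric names, threshold values, and the `Ticket` type are assumptions made for illustration; the hypothesis and next-action stages are reduced here to attaching the list of common causes to check.

```python
from dataclasses import dataclass

# Hypothetical, pre-agreed thresholds: metric -> (minimum allowed, maximum allowed).
THRESHOLDS = {
    "roas": (2.0, None),
    "ad_spend": (None, 50000.0),
    "stock": (10, None),
}

# The "common causes" named in the text, used as a checklist for each anomaly.
COMMON_CAUSES = ("channel", "product", "inventory", "measurement")


@dataclass
class Ticket:
    metric: str
    value: float
    causes_to_check: tuple
    status: str = "pending_approval"  # nothing opens without a human


def detect_anomalies(metrics: dict) -> dict:
    """Monitoring stage: return only the metrics outside their agreed range."""
    anomalies = {}
    for name, (low, high) in THRESHOLDS.items():
        if name not in metrics:
            continue
        value = metrics[name]
        too_low = low is not None and value < low
        too_high = high is not None and value > high
        if too_low or too_high:
            anomalies[name] = value
    return anomalies


def draft_tickets(metrics: dict) -> list:
    """Ticketing stage: one pending ticket per anomaly, with causes attached."""
    return [
        Ticket(metric=name, value=value, causes_to_check=COMMON_CAUSES)
        for name, value in detect_anomalies(metrics).items()
    ]


def approve(ticket: Ticket) -> Ticket:
    """Human approval step: only this call moves a ticket to 'open'."""
    ticket.status = "open"
    return ticket
```

The sketch only works because `THRESHOLDS` is a fixed table. If the thresholds change from day to day, there is nothing stable to encode, which is the reason for aligning the rules first.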

Use case 3: pre-processing for product registration/updates (reducing rework)

Last time (Human → AI)

CSV formatting, diff extraction, required-field checks, policy checks

Because file processing can be done locally, it is easier to drop into a standardized inspection flow

This time (AI → AI)

Work is split among: diff detection AI → required-field check AI → policy check AI → fix suggestion AI

Escalate only exceptions to humans

For applying changes, proceed step-by-step: “human approval → start with low-risk operations”

Change point: from “help with inspection” to “break down the update process and route it.”

Decision criteria:

If the format is stable, “use it”

If the source data is unstable, “improve quality first” (if it’s all exceptions, AI will get stuck)
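The split into stages can be sketched as a small pipeline over two CSV snapshots. The column names, required fields, and the single banned-word policy rule are hypothetical. The sketch covers diff detection, the required-field check, and the policy check, and collects the issues that a fix-suggestion stage or a human would then pick up.

```python
import csv
import io

REQUIRED_FIELDS = ("sku", "title", "price")  # hypothetical required columns
BANNED_WORDS = ("guaranteed",)               # hypothetical policy rule


def load_rows(csv_text: str) -> dict:
    """Parse CSV text into a dict keyed by SKU."""
    return {row["sku"]: row for row in csv.DictReader(io.StringIO(csv_text))}


def detect_diff(old: dict, new: dict) -> list:
    """Stage 1: keep only rows that are new or changed."""
    return [row for sku, row in new.items() if old.get(sku) != row]


def check_required(row: dict) -> list:
    """Stage 2: report required fields that are missing or blank."""
    return [f"missing {name}" for name in REQUIRED_FIELDS
            if not (row.get(name) or "").strip()]


def check_policy(row: dict) -> list:
    """Stage 3: report banned words found in the title."""
    title = (row.get("title") or "").lower()
    return [f"banned word: {word}" for word in BANNED_WORDS if word in title]


def run_pipeline(old_csv: str, new_csv: str):
    """Return (SKUs ready for human approval, exceptions to escalate)."""
    old, new = load_rows(old_csv), load_rows(new_csv)
    ready, exceptions = [], []
    for row in detect_diff(old, new):
        issues = check_required(row) + check_policy(row)
        if issues:
            exceptions.append({"sku": row["sku"], "issues": issues})
        else:
            ready.append(row["sku"])
    return ready, exceptions
```

Note that `ready` means “passed the checks,” not “applied.” Applying changes stays behind human approval, as described above.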

Summary

Last time, I described OpenClaw as a “local execution-type agent that moves from chat,” an entry point for reducing “explanation and transcription” in interruption-driven work. What this news suggests is the next step: a world where AI assigns work to AI, divides it up, and moves forward with orchestration included.

Next step to try:

Start with one “read-only auto report” or “checklist.” Don’t grant execution permissions at first; expanding step by step while watching outcomes and risks is the safest approach.

References (Sources)

Introducing OpenAI Frontier / OpenAI / https://openai.com/index/introducing-openai-frontier/

OpenAI Frontier | Enterprise platform for AI agents / OpenAI / https://openai.com/business/frontier/

Introducing the Codex app / OpenAI / https://openai.com/index/introducing-the-codex-app/

OpenClaw founder Peter Steinberger is joining OpenAI / The Verge / https://www.theverge.com/ai-artificial-intelligence/879623/openclaw-founder-peter-steinberger-joins-openai

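OpenClaw AIで「指示待ち作業」を減らす:チャットから動くローカルAIエージェントの使いどころ [Reducing “waiting-for-instructions” work with OpenClaw AI: where to use a local AI agent that runs from chat] / Mia Sato (GDX) / note / https://note.com/gdx_ai/n/n3193e5f1aa77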

Note: Parts of this text were drafted with assistance from ChatGPT and then edited and expanded by the author. The content reflects the author’s personal views and does not represent an official statement or position of GDX Inc. This information is for reference; please refer to official announcements and primary sources.
